Whether distinguishing between similar-looking edible and poisonous plants or separating trash from recyclables, humans have always relied on the ability to categorize.

Learning to sort items into categories based on basic visual features reshapes our perceptual sensitivity. Differences between physically similar but categorically distinct objects become exaggerated, while differences between physically similar objects in the same category become smaller.

Neuroscientists, including Thomas Sprague, Edward Ester and John Serences, suspected that these biases might originate in the brain’s occipitoparietal cortex, a region that helps us integrate information about our environment gathered through our senses, including vision.

To test this hypothesis and get a better idea of how people learn to categorize information in the world around them, the researchers carried out three experiments.

Their findings are detailed in a study published in the Journal of Neuroscience.

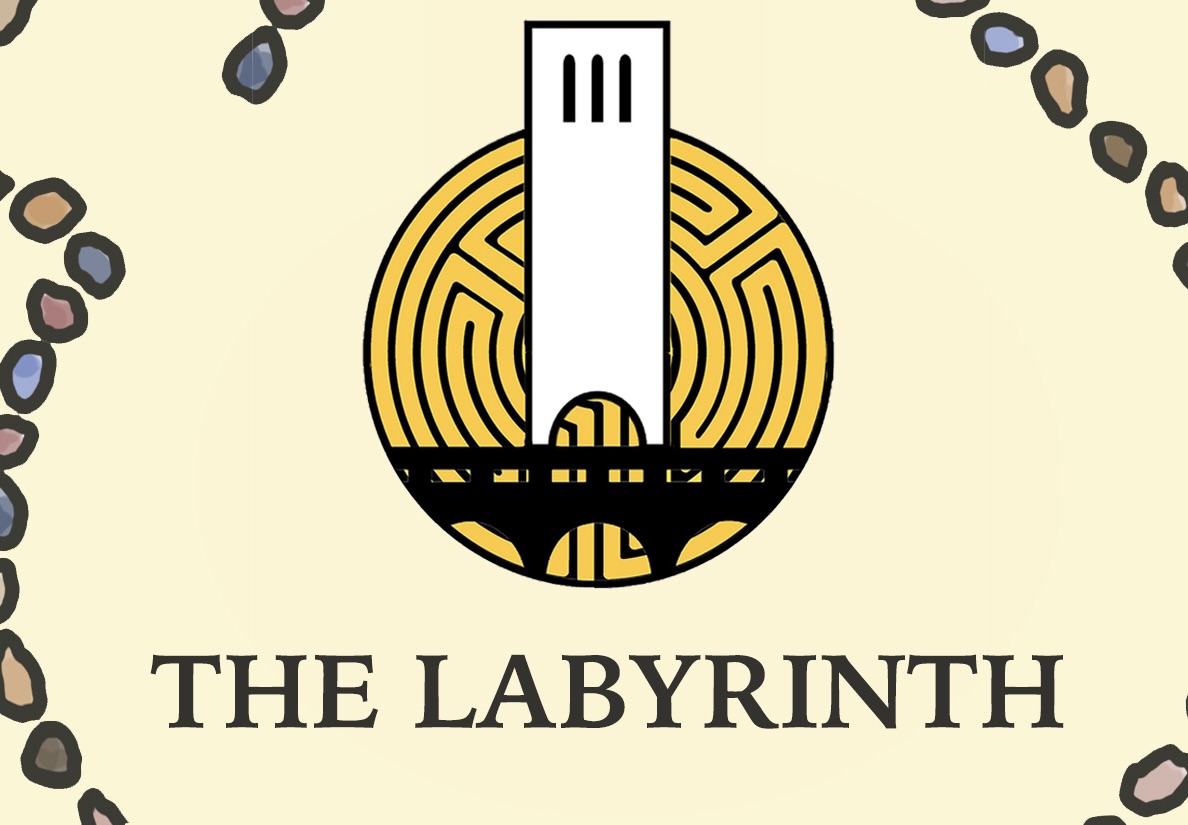

Through trial and error, the study’s participants learned to sort bars of various orientations, shown on a computer monitor, into one of two arbitrary categories, depending on whether each bar was rotated clockwise or counterclockwise relative to a randomly selected boundary orientation. They picked up these category labels “very quickly … within five to 10 minutes of practice,” said Sprague, an assistant professor in UC Santa Barbara’s Department of Psychological and Brain Sciences and a faculty member in the Interdepartmental Graduate Program in Dynamical Neuroscience (DYNS).

“What we then wanted to do was see if that learning actually changes how the visual system is processing information,” said Ester, lead author of the study and an assistant professor in Florida Atlantic University’s Department of Psychology.

The researchers tested categorization based on object orientation and spatial location using functional magnetic resonance imaging (fMRI), which measures activity across brain regions. They complemented fMRI with another commonly used technique, electroencephalography (EEG). Because EEG offers finer temporal resolution than fMRI, it could show how quickly the category-biased neural representations emerged.

“That tells us what different stages of processing might be influenced,” Ester explained.

The results suggested that the effects of categorization were “basically immediate,” according to Sprague. “As soon as you’re engaged in a task and sensory input comes, it’s already assigned a category and this impacts the neural representation,” he said.

The scientists also ran additional experiments to rule out alternative explanations, such as motor planning or response selection, and to confirm that their findings reflected categorization itself.

A: In experiment 1, participants viewed iso-oriented bars assigned one of 15 unique orientations from 0° to 168° in each trial. B: The researchers designated two arbitrary categories by randomly choosing a stimulus orientation as a category boundary and assigning the seven orientations counterclockwise of it to Category 1 and the seven orientations clockwise of it to Category 2. Courtesy of Edward Ester
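The category assignment described in the caption can be sketched in a few lines. This is a hypothetical illustration, not the researchers’ code: it assumes the 15 orientations are evenly spaced in 12° steps, treats orientation as repeating every 180° (a bar at 0° looks the same as one at 180°), and takes larger angles to mean counterclockwise. The names `signed_offset` and `assign_categories` are ours.

```python
import random
from collections import Counter
from typing import Dict, Optional

# Assumption: 15 evenly spaced orientations, 0, 12, ..., 168 degrees.
ORIENTATIONS = [12 * k for k in range(15)]


def signed_offset(theta: int, boundary: int) -> int:
    """Signed distance (degrees) from the boundary, wrapped into [-90, 90).

    Orientation repeats every 180 degrees, so 0 and 180 are the same bar.
    """
    return (theta - boundary + 90) % 180 - 90


def assign_categories(boundary: int) -> Dict[int, Optional[int]]:
    """Map each orientation to Category 1, Category 2, or None (the boundary)."""
    labels: Dict[int, Optional[int]] = {}
    for theta in ORIENTATIONS:
        offset = signed_offset(theta, boundary)
        if offset == 0:
            labels[theta] = None  # the boundary orientation itself
        elif offset > 0:
            labels[theta] = 1     # assumed counterclockwise of the boundary
        else:
            labels[theta] = 2     # assumed clockwise of the boundary
    return labels


boundary = random.choice(ORIENTATIONS)  # randomly chosen category boundary
labels = assign_categories(boundary)
print(boundary, Counter(labels.values()))
```

Whichever boundary is drawn, seven orientations land in each category and the boundary orientation belongs to neither, matching the seven-and-seven split in the caption. Because 90° is not a multiple of 12°, no stimulus sits exactly on the far edge between the two categories.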

Additionally, they modeled how various neural populations respond to different visual orientations. Using the measured patterns of brain activity, the models generated reconstructions of the stimuli subjects were viewing, allowing the researchers to decode, or predict, what subjects were looking at on a given trial.
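The article does not spell out the model. One widely used approach in this line of research is an inverted encoding model: each measured response is treated as a weighted sum of a small set of idealized, orientation-tuned “channels”; the weights are fit on training trials; and inverting the fitted weights turns a new activity pattern into a channel-response profile from which orientation can be read out. The sketch below runs that procedure on simulated data. The channel count, tuning-curve shape, noise level and population-vector readout are all assumptions for illustration, not details from the study.

```python
import numpy as np

# Hypothetical settings for illustration only; not taken from the study.
N_CHANNELS = 9    # number of modeled orientation channels
N_VOXELS = 50     # number of simulated voxels (or EEG electrodes)
NOISE_SD = 0.1    # simulated measurement noise
ORIENTS = np.arange(0, 180, 12)                     # 15 orientations, 0..168 deg
CENTERS = np.arange(N_CHANNELS) * 180 / N_CHANNELS  # channels' preferred orientations


def basis(thetas):
    """Idealized channel responses, shape (n_channels, n_trials).

    Orientation is 180-degree periodic, so angles are doubled before the cosine.
    """
    d = np.deg2rad(2 * (np.asarray(thetas, dtype=float)[None, :] - CENTERS[:, None]))
    return (0.5 + 0.5 * np.cos(d)) ** 5


def decode(channel_resp):
    """Population-vector readout of orientation (degrees in [0, 180)) per trial."""
    c2 = np.deg2rad(2 * CENTERS)
    x = np.cos(c2) @ channel_resp
    y = np.sin(c2) @ channel_resp
    return np.rad2deg(np.arctan2(y, x) / 2) % 180


rng = np.random.default_rng(0)
w_true = rng.random((N_VOXELS, N_CHANNELS))  # unknown voxel-to-channel weights

# Training: simulate B = W C + noise, then estimate W by least squares.
train_thetas = np.repeat(ORIENTS, 10)
c_train = basis(train_thetas)
b_train = w_true @ c_train + NOISE_SD * rng.standard_normal((N_VOXELS, c_train.shape[1]))
w_hat = np.linalg.lstsq(c_train.T, b_train.T, rcond=None)[0].T

# Test: invert the estimated weights to recover channel responses, then decode.
c_test = basis(ORIENTS)
b_test = w_true @ c_test + NOISE_SD * rng.standard_normal((N_VOXELS, len(ORIENTS)))
c_hat = np.linalg.lstsq(w_hat, b_test, rcond=None)[0]
decoded = decode(c_hat)

error = (decoded - ORIENTS + 90) % 180 - 90  # circular decoding error, degrees
print(np.round(decoded, 1))
print("mean |error| (deg):", np.abs(error).mean())
```

Under these settings the decoding error should stay within a few degrees; raising `NOISE_SD` degrades it. In the study’s framing, the interesting question is whether the reconstructed profiles drift toward a category prototype rather than tracking the physical orientation exactly.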

The brain activity patterns that emerged differed depending on the orientation of the stimuli.

“Our central question was does learning how to categorize these stimuli or does the intent to try and place them in different categories actually influence those patterns of activity such that stimuli that are from the same category become more similar to one another?” Ester said.

Indeed, the collaborators found that, across the group, brain activity patterns evoked by stimuli from the same category became more similar to one another and more distinct from the patterns evoked by stimuli from the other category.

Furthermore, the study reports that the reconstructed representations resembled a category exemplar or prototype more than the actual physical stimulus.

This suggests that when a stimulus is being categorized, relatively early visual areas begin to process it in a way that reflects its category membership more than its physical attributes.

“That’s something that’s relatively surprising, because it’s long been thought that the visual system is a label-blind system that responds in very specific ways to certain types of stimuli,” Ester said. “And those types of responses are immutable. They’re not really selective or they can’t really be changed by intent — but we find that they, in fact, can.”

The representations also tracked participants’ categorization performance: the category bias in the activity patterns was stronger on trials in which participants categorized the stimulus correctly than on trials in which they did not.

The paper offers insight into how categorization may shape information processing at the earliest stages of the visual system, in the occipitoparietal cortex.

“Maybe the most important finding from this study is it provides some intriguing insights into a debate about whether categorical decision making is something that happens kind of at an abstract level, say, [in the later stages of the visual processing system such as in the] parietal cortex or frontal cortex, where you’re reasoning about veridical sensory information,” Sprague stated. “This is saying, ‘Well, actually, there’s information in as early as the primary sensory cortex that is already fundamentally different based on a very rapidly learned arbitrary category.’”

“It really gives us a window into how previous knowledge and learning can actually shape ongoing visual processing and do so in a way that’s helpful or optimal, that helps you to solve a particular problem or perform a particular task,” Ester added.

Ester is next interested in “really trying to drill down and understand the different types of changes in the brain that are happening when you look at category effects.”

He hopes to do this by examining categorical effects at the levels of individual neurons or small populations of neurons.

“Right now what we’re measuring is, in many ways, a very abstract picture on how the brain might be processing information,” Ester said. “What we’d ideally like to do is adopt a more reductionist approach and get a better, higher-resolution picture on the types of changes that are happening in the brain when people learn to categorize this information.”

The study was funded by the National Institutes of Health and a James S. McDonnell Foundation grant awarded to Serences.
