One of my earliest experiences questioning my ability to distinguish natural and synthetic content on social media was when I clicked on a link to a YouTube Short I received from my mom. The video showed a large rat colony swarming Trafalgar Square in London, wading through murky puddles outside St. Paul’s Cathedral and the National Gallery. “London, just saying,” she texted judgmentally in our family group chat.

My Canadian mother holds a longstanding and impenetrable grudge against England. On a social media platform where algorithmic feedback loops already reinforce our personal belief systems, this obscure AI-generated video fueled her vendetta. I texted to highlight how the rats were morphing into one another in the foreground of the frame: One rat’s face would contort into its own rear, pairs would collide and amalgamate into one, and limbs would randomly transition into tails. “Oh…” my mom replied 30 minutes later, “I’m doomed.”

But this was over a year ago, and as the percentage of AI-generated content on social media rapidly grows, my own ability to identify generated content has begun to falter. As of March 2025, 71% of social media content was AI-generated, with a forecasted increase to 90% over the course of this year.

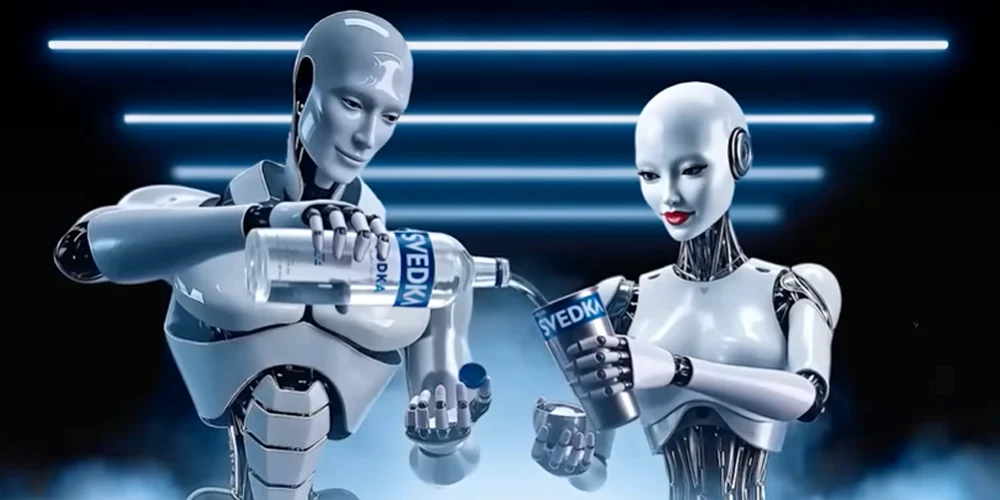

AI social media content can range from virtual models, like H&M’s digital twins clothing campaign, which duplicated real human models to create doppelgangers, to an entirely AI-generated Svedka vodka commercial recently televised during Super Bowl LX. Watching the big game, my friends and I collectively agreed that the ad was our least favorite and mysteriously uncomfortable. Only in researching AI advertisements for this article did I realize it was also 100% generated. By using AI for advertising, companies can cut production costs by 90-99%: the cost per minute of AI-generated video production ranges from $0.50 to $30, while freelance video production runs $1,000 to $5,000 and agency production can demand upwards of $50,000 per minute.

Courtesy of Svedka

Certain AI social media content is elevated in absurdity. Last summer, I found myself in the unfortunate circumstance of employment as a camp counselor during the height of “Italian brainrot,” a series of synthetically generated surrealist creatures that fused animals with inanimate objects and recited nonsensical Italian phrases.

These recurrent memes that captivated elementary audiences nationwide fall into a content category colloquially dubbed “AI slop”: inexpensive and homogenized low-quality imagery that’s criticized for its automation of the artistic process, reduction of creative variety and production of content without culture.

From time to time I’ve been guilty of mindless indulgence in such content. After an evening on Del Playa Drive, I’ve scrolled through AI-generated food videos on Instagram Reels: An Italian grandmother presenting a steaming tray of colorful Tuscan dishes, a bed built from a larger-than-life soft pretzel, assortments of glass fruits sliced into satisfying cross-sections or an anthropoid baby soup dumpling eating spoonfuls of its own dough. Gross, but strangely mesmerizing.

As absurd as these videos may be, recent studies suggest that such AI content outperforms human-made video in engagement on social media. In the absence of a creative process guided by human impulse and emotion, these uncanny animations can still sometimes elicit specific and innately human responses. Their apparent impossibility evokes an anxious uncertainty about one’s own reality.

Mirror neurons and anthropomorphism can cause us to feel empathy toward robots and animations proportionate to their degree of human likeness, but at precise levels of similarity, our response to humanlike objects descends into uneasiness and revulsion. This uncanny valley effect offered an evolutionary advantage as Homo sapiens competed against other early-human species like Neanderthals. The sudden shift toward fear and aversion you may experience when exposed to synthetic social media content is a lingering early warning system against the “almost human.” This unsettling effect occurs primarily in the ventromedial prefrontal cortex: a bottom surface region of the brain heavily involved in value judgments and social cognition.

I’ve been encountering the uncanny valley effect with increasing frequency. Last month I discovered a song I loved as a TikTok audio and saved it to my winter quarter Spotify playlist. Days later, I froze mid-doomscroll when a video appeared of the same singer, Sienna Rose, feebly denying allegations that she was AI-generated herself.

“So I keep seeing these comments everywhere, ‘she’s AI,’” the musician gossips to the camera. “Well … I feel real.” Only a hiss of white noise in the background of her entire discography finally confirmed her AI-generated identity for me: this hiss is a common error inserted by automatic sound-layering technology, along with other signature traits of generative music like inconsistent drum patterns, generic verse-chorus structures, suspiciously positive lyrics and an absence of live performances.

But as generative technology improves, synthetic art grows harder to identify. A couple of months ago, my friend scrolled past a video of a 6-month-old baby scaling a boulder with expertise and upper body strength. An avid rock climber herself, my friend swallowed a twinge of bitterness: “This baby’s already better at climbing than me,” she joked to her boyfriend, making light of her own jealousy. But this envy was quickly replaced with embarrassment as he gently reminded her, “Babies can’t rock climb.” There’s a blurred division between content that shares fragments of our lives and content that simply attempts to mirror them, and the unreal feels alarmingly human.

When separation becomes this subtle, the impact extends beyond art and music into the political sphere. The same technologies that blur artistic authorship can also fuel propaganda campaigns, bot swarms that reinforce echo chambers and distort social science data, and derogatory deepfakes deployed for political messaging. Attention to detail and vigilance toward patterns are essential to filter through content and maintain an authenticity radar.

Late at night, as I watch Khan Academy videos in the library, YouTube interrupts my study session with a video ad of AI-generated grilled chicken. I switch tabs to search what time the library opens tomorrow morning and skim the Gemini summary (7 a.m., btw). I scroll Pinterest while the ad wraps up, but my screen is filled with synthetic faces, living spaces and sketchbook pages. My eyeballs are dry from blue light and I’m burnt out from playing “Real or fake?”

Tiring as it may be, I remind myself that staying persistent in the pursuit of art and knowledge — stubbornly human interests — is what keeps my authenticity radar finely tuned.
